We’ve performed our fair share of document migrations. Each one is challenging but there are plenty of similarities, enough for us to have a standardised approach. Ben, our Director, has given presentations on our approach at conferences in the UK and the US. We’ve asked him to write a guide to help others, and over the next few weeks we’ll be released selected sections that we think stand on their own. Later this year, we’ll release a complete document with diagrams and further tid-bits to help you plan and execute just about any document migration project.

For my first post, I’ll share my experience on how to assess the source data, to ensure the documents and metadata are well understood and 100% accounted for at the completion of the migration.

Carry Out an Information Audit

The goal of the information audit is to quantify and qualify the source data. The completed audit will also be used to plan initial size and growth of the new system, to determine the level of data cleansing required, and the general difficulty of the migration. This section will describe how I conduct an information audit.

Information Accessibility

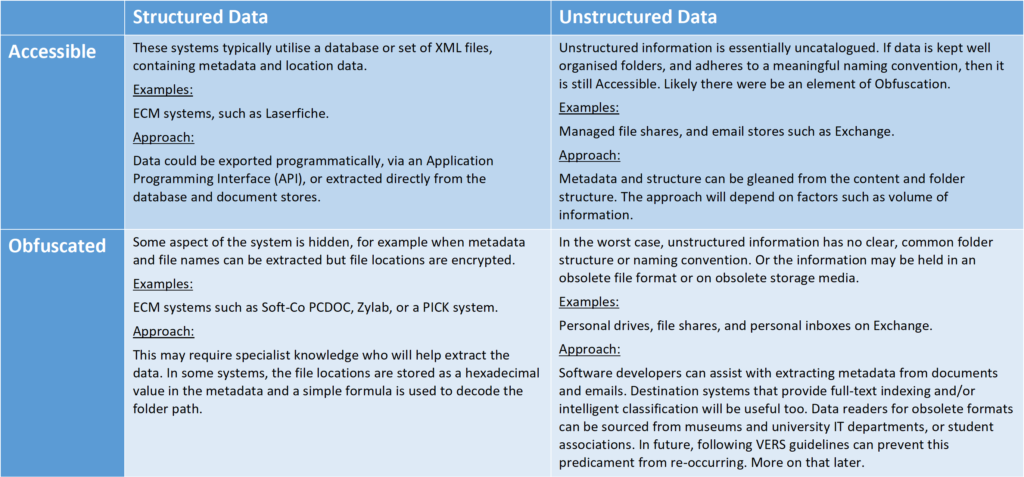

Your first task is to understand how accessible the data is, then what approach to take, and how difficult that might be. At Ingencia, we’ve created a grid to classify the type of system we’re migrating from.

Some source systems will be easy to migrate from (Structured and Accessible systems like Laserfiche), some less so (such as Obfuscated and Unstructured systems like a folder structures or email systems.) In my experience there is usually some element of obfuscation in even the most structured systems.

In which quadrant does your source information fall?

Data Dictionary

A Data Dictionary contains a detailed list of all of the fields types, and an analysis on the information they contain. I recommend creating a spreadsheet and include these headers as a minimum:

- Field Name

- Data Type

- Data Size

- System/User Defined

- Compulsory/Optional

- Repeating/Single

- For example, a publication can have multiple authors, making Author a Repeating Field

- Process Description

- Note how the field is populated, updated and used

- Security

- Record who has access to the field, what type of access, and under what conditions.

- Notes or Other Observations

Consider creating a corporate-wide Data Dictionary to provide consistency between all of your systems.

Additional Audit Outcomes

Build a complete picture of the source information. Try to understand how the information fits into into your records and data governance policies, what the business needs are, how the IT infrastructure will be designed, and what additional resources will be required. Use these questions to help guide you:

- How quickly did the source system grow and can future creation rates be extrapolated?

- How accessible is your information?

- Are any parts of the system encrypted or otherwise obfuscated?

- How much of it needs to be migrated?

- Can some information be destroyed before migration?

- How well do you or your staff understand the technology that stores the data?

- Do you have resident experts or will you need external consultants?

- Does the source system have an end-of-life date approaching?

- What metadata is used?

- Are all the fields used as designed, are they consistent with expectations, and used correctly on all documents?

- Can the destination system handle all of the data types from the source system?

- Are there fields that can be shrunk in the destination system?

- Are there fields that have been repurposed during the lifecycle of the source system?

- Can the metadata design be improved, such as by swapping free-text fields selection boxes?

As the migration project progresses, some of the answers to these questions may change a lot or completely.

The information audit will likely highlight additional resource requirements, such as the need to bring in additional developers. Laster posts will cover resourcing and migration methodologies, which tie nicely together.

Metrics

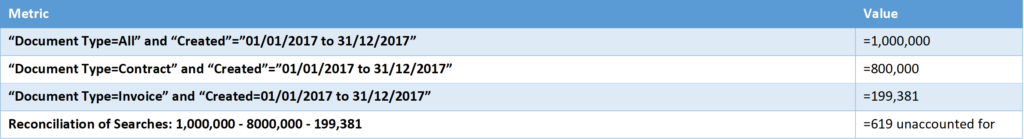

Metrics are required to reconcile the final migration numbers, plan the migration windows, and justify any skipped documents. As you generate your metrics, confirm your figures by running multiple searches of the same information using different criteria. For example:

- If the documents are organised by Document Type, find the number of documents by each document type, within a specific date range.

- Next, search for all documents within the date range.

- Finally, after running the search using the source system’s UI, run the search again using the API or database of the source system.

Cross checking your results using different criteria will help you spot bad metadata (or incomplete search criteria) that could cause documents to be unintentionally excluded.

When running the searches, make sure you’re using an account with full rights!

Building the extraction and migration procedure is an iterative process. As you progress, you may find metadata or document are missing, or doesn’t match your expectations, and requires further investigation. An information audit will provide the tools to assess the comprehensiveness of the extraction and the success of the migration.

Thanks for reading! We hope you found something useful here. Have we missed something? Would you do something differently? We’d love to hear what you have to say, and please feel free to reach out to Ben Ernsten-Birns or contact us through our contacts page.